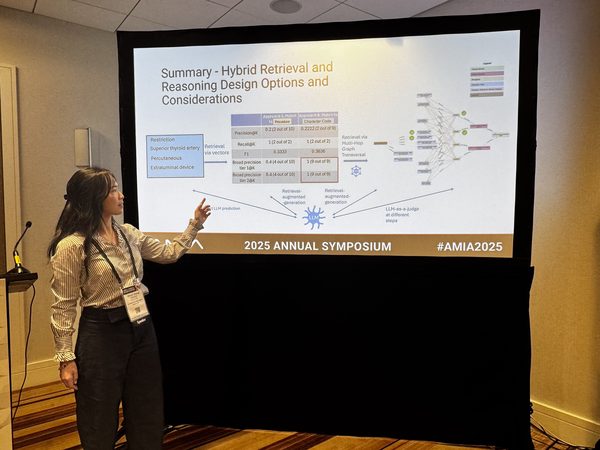

LLMs are powerful, but in high-stakes areas like healthcare, sounding right isn’t the same as being right, and even when AI can explain itself, that doesn’t mean experts can verify it.

🔍

When Retrieval Misleads

The model finds a concept that looks like a match based on word overlap, but means something clinically different. Keyword similarity masks semantic divergence.
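A minimal sketch of how word overlap can favor the wrong concept. The scoring function (Jaccard similarity over word tokens) and the candidate terms are assumptions chosen for illustration, not the retrieval method of any particular system; "insulin-dependent diabetes" is treated here as an older synonym for the query term.

```python
def token_overlap(a: str, b: str) -> float:
    """Jaccard similarity over lowercase, whitespace-separated tokens."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb)


query = "type 1 diabetes mellitus"

# Hypothetical candidates returned by a retriever.
candidates = [
    "type 2 diabetes mellitus",    # shares 3 of 4 words, clinically different
    "insulin-dependent diabetes",  # shares 1 word, much closer in meaning
]

# Rank purely by keyword overlap, as a naive retriever might.
ranked = sorted(candidates, key=lambda c: token_overlap(query, c), reverse=True)
best_match = ranked[0]

for c in ranked:
    print(f"{token_overlap(query, c):.2f}  {c}")
```

The top-ranked candidate differs from the query by a single token, and that token is the one that carries the clinical distinction.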

🌀

Semantic Drift

The answer starts in the right clinical territory but gradually shifts meaning through plausible-sounding steps — ending somewhere subtly wrong.

Read my case study →

💭

Hallucination

The model generates clinical codes, classifications, or relationships that don't exist. Without a formal knowledge base to check against, fabrications are invisible.
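One way to make fabrications visible is to check every generated code against a formal knowledge base before it reaches the user. The sketch below assumes a tiny in-memory lookup table standing in for a real terminology service; `KNOWN_CODES`, `split_by_existence`, and the sample model output are all hypothetical.

```python
# Toy stand-in for a formal knowledge base: code -> label.
# A real system would query a maintained terminology, not a hard-coded dict.
KNOWN_CODES = {
    "E10.9": "Type 1 diabetes mellitus without complications",
    "E11.9": "Type 2 diabetes mellitus without complications",
    "I10": "Essential (primary) hypertension",
}


def split_by_existence(generated_codes):
    """Separate codes found in the knowledge base from codes that are not."""
    verified = [c for c in generated_codes if c in KNOWN_CODES]
    unverified = [c for c in generated_codes if c not in KNOWN_CODES]
    return verified, unverified


# Hypothetical model output; the last code is absent from the toy table.
model_output = ["E11.9", "I10", "E11.99"]
verified, unverified = split_by_existence(model_output)

print("verified:  ", verified)
print("unverified:", unverified)
```

An existence check like this only catches codes that are missing from the table. A real code attached to the wrong condition would still pass, so it complements expert review rather than replacing it.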

🧩

The Explainability Gap

AI can show a reasoning path, but domain experts need to verify, explore, compare, and generate hypotheses — not just read explanations. That’s what it takes to keep the AI accountable.

⚖️

Logical Rule Misapplication

Even when what the system reasons over is correct (the knowledge holds, the source is right, the concept didn’t drift), how it reasons over that knowledge must still be sound.

Clinical medicine’s conditional logic is among the densest of any domain:

- IF / AND / OR

- UNLESS / EXCEPT

- Satisfying more than N out of M criteria

When a clinical AI cannot hold that logic, it doesn’t fail outright; it takes shortcuts.
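To make the difference concrete, here is a sketch of a rule that combines all three patterns, next to a shortcut version that quietly drops parts of it. The rule, its field names (`finding_a`, `exclusion`, and so on), and the thresholds are invented for illustration and do not represent any real clinical guideline.

```python
def more_than_n_of(n, criteria):
    """True when strictly more than n of the criteria are satisfied."""
    return sum(bool(c) for c in criteria) > n


def full_rule(p):
    """IF a AND (b OR c), AND more than 2 of 4 criteria, UNLESS exclusion."""
    gate = p["finding_a"] and (p["finding_b"] or p["finding_c"])
    enough = more_than_n_of(2, p["criteria"])
    return gate and enough and not p["exclusion"]


def shortcut_rule(p):
    """A shortcut: ignores the OR branch and the UNLESS clause,
    and treats 'more than 2 of 4' as 'any criterion met'."""
    return p["finding_a"] and any(p["criteria"])


# Hypothetical patient record: only 2 of the 4 criteria are met.
patient = {
    "finding_a": True,
    "finding_b": False,
    "finding_c": True,
    "criteria": [True, True, False, False],
    "exclusion": False,
}

print("full rule:    ", full_rule(patient))
print("shortcut rule:", shortcut_rule(patient))
```

Both functions return an answer without raising an error, which is the problem: the shortcut produces a confident result that the full rule would reject.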